I’ve heard some newbies and even not-so-newbies wonder about what to do in 1:1 meetings with their managers. As a result, I gave some advice, and I listened to others’ advice. After collecting some thoughts, here’s the not-so-short overview…

The Easy Stuff

Company Inquiries

Ask for clarifications or details on the company’s roadmap. How’s the business side of things? How is the engineering dept progressing towards meeting roadmap goals? How is the roadmap changing? What factors might cause it to change?

Keep in mind that this topic depends on your company’s size. A smaller company means your manager is more likely to have more knowledge about the company’s direction and decision-making.

Team Improvement

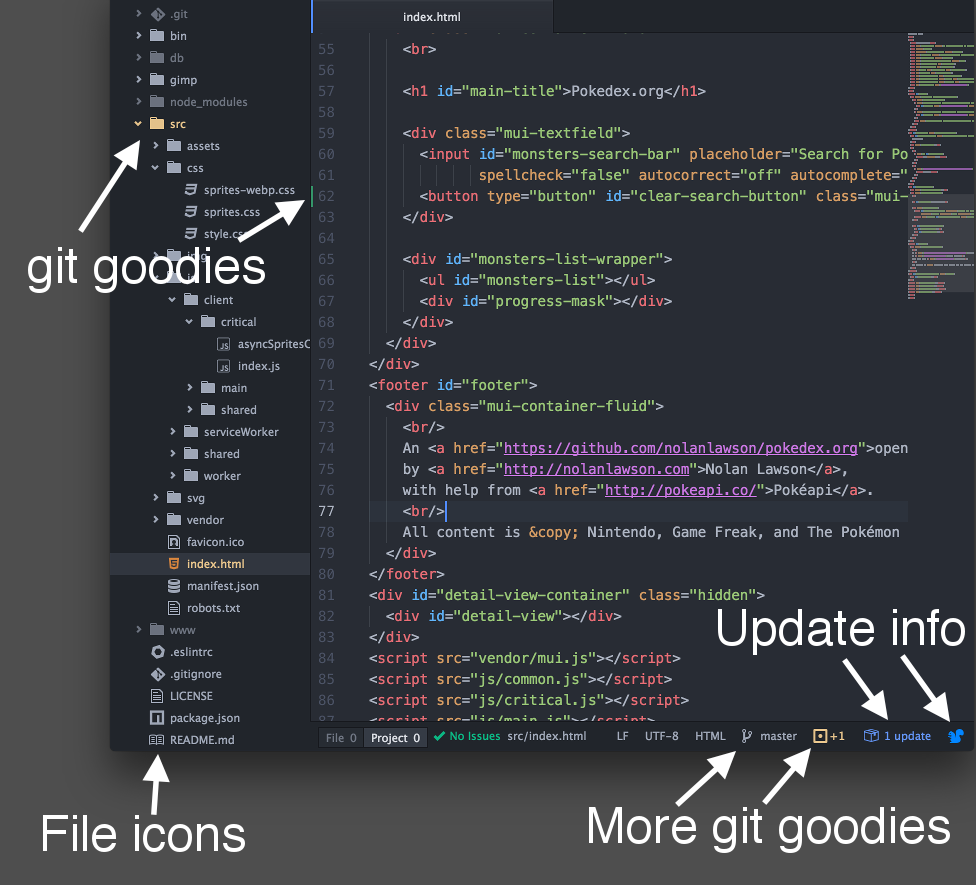

Bring up any pain points. Talk about possible improvements in company processes (PTO, food, etc.) or technical processes (git practices, code reviews, hiring, dev environment, deployment, tech stack).

Self Improvement

How can you improve your value to the company? Are there any upcoming training opportunities? What do you want to learn? What does the company want you to learn? What can the company help you learn via training classes and conferences?

The Tough Stuff (Get Feedback!)

Try to get specific feedback. Asking “How am I doing?” is imprecise, and therefore it could be less helpful. Unless you’re screwing up something big, most managers probably don’t have a solid response ready (they’ve got other things on their minds, like the other meetings they have that day). More precise: “What skills should I focus on? How can I help more? Are there new projects in the roadmap that I can really add value to? What goals does the company have for my role?”

Anyway, one of the biggest things I focus on in my 1:1s is training/learning opportunities. For example, “What’s the company’s training budget/policy? Is there any training I could take that would benefit the company directly? What about training that I’m personally interested in? Can I go to conference A, B, and/or C? Can I attend workshop D, E, and/or F? There’s a meetup I want to attend, but I’d have to leave work early every Tuesday; is that ok?”

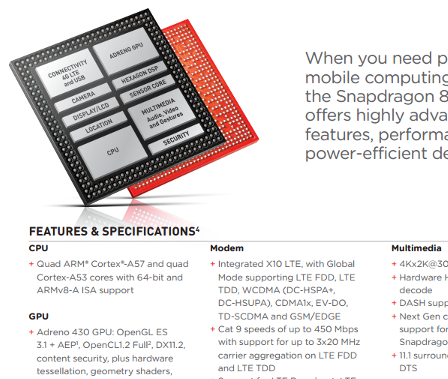

I also like to discuss new hotness. For example, “I’ve been interested in technology X; can I give a presentation to the team about it even if we can’t use it any time soon? Can we possibly start using it?”

And suggestions for process improvements. For example, “Here are my thoughts re: onboarding, hiring, story planning, etc”.

Sometimes, I discuss team issues (personnel or process). For example, personnel issues: “Person X tends to interrupt me a lot. I’ve tried A, B, and C. So things have gotten better, but there’s still room for improvement. Can you help?” For example, process issues: “We need a better convention for git branching/merging.”

After the first 2 or 3 1:1 meetings, a decent chunk of time is dedicated to just reflecting on the topics brought up in the previous meeting.

You can also talk about a lot of initiatives outside of the day-to-day. You can express a desire to: spearhead company culture stuff, organize team events, be a mentor, start an internship program, organize meetups, volunteer at recruiting events, etc.

So I guess it’s a mutual feedback session: I want to discuss ways for me to grow + ways for company/team to grow.

Less directly, it’s a good way to see if your interests are still aligned with the team. For example, through the 1:1s, if you express concerns that are never addressed, or if you express a desire to work on something but never get the opportunity, then the 1:1s help you learn it’s time to switch teams or even jobs :(

The Bonus Stuff (Get Perspective)

Ask your manager about their experiences. How many meetings do they attend on a typical (and atypical) week? What goals do they have for themselves? What do they like/dislike about the job? What did it take for them to achieve their own career goals?

You gain a lot of insight by learning about your manager, their perspectives, and their typical day (outside of directly interacting with you and your team). You gain insight into what it’s like to be in a formal leadership role, how content/stressed your manager is (look out for burnout), etc. This insight can help you better approach your manager with new ideas and even help you spot signs that your manager might be unhappy at the company. If the manager leaves, that could have a huge impact on your job.

What NOT To Do

It’s not a status meeting, code review session, etc.

This isn’t a time for simply recapping “so what have you been working on?” If your manager doesn’t already know the answer to that question, that’s a bad sign, or it means your organization isn’t properly structured to give managers the time/opportunity to understand their direct reports’ work.

In other words, the 1-on-1 shouldn’t just be used as a more in-depth Scrum/status meeting.

I’ve also heard that 1-on-1s could be used for in-depth code reviews, but if your organization doesn’t do proper code reviews as part of the usual process of development, that’s another bad sign.

Remember: Have a Plan and Get Takeaways

Go into the 1-on-1 meeting with some kind of agenda, plan, questions, etc. Don’t just go into the meeting empty-handed.

Based on the conversation, take some notes. Use them as reference, but also use them to track your own progress towards meeting any goals or action items discussed in previous meetings.